Previously I wrote about how we should be treating our text data as Text and not try to shoehorn this data into a rowset solution. Once we start treating our text as text we can start to unlock the hidden value of the text. This hidden value can and should lead to improved business processes, actionable insights and a strategic advantage for your business. One of the first tools of the trade you need to understand is Bag of Words (BOW).

Bag of Words

In its simplest form, BOW is a list of distinct words in a document and a word count for each word. BOW is a simple model to represent text as a numerical structure. Consider the term “document” to be any text you can access regardless of the format, from text in a word document to just a standalone string variable. I leave it up to you to extract the text from whatever format you are working with.

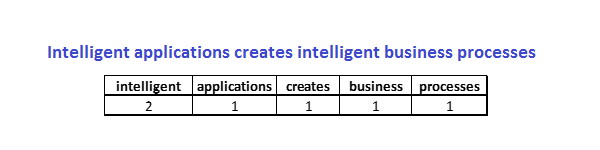

Let’s look at a simple document.

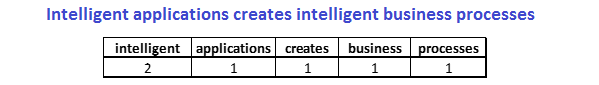

Intelligent applications creates intelligent business processes.

The BOW representation of this simple document is:

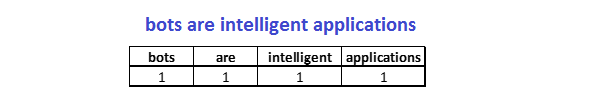

For the text: “Bots are intelligent applications” the BOW representation is:

The counts we assign to each word is not required to be the actual word count. The numbers are really just term frequencies. Term frequencies can be calculated in many different ways depending on your requirements. For our example we use the very common definition which is simply the number of times the term appears in the document (term count).

Tokenizing

It is easy to look at the simple examples and determine the term frequencies. In a real-world example there must be some automation to determine these frequencies. This process is known as tokenization. Tokenization splits the document into words. How you choose to tokenize is implementation specific. I simply tokenized on white space and lower-cased the tokens and removed any punctuation ( using the power of my mind). You generally do not have to create your own tokenization algorithms. There are many packages and libraries available for almost any languages. Unless tokenization is your hobby, I suggest you leave it to an existing package or service to do the work. There are many nuances to consider when you create a tokenization process.

BOW is a simple model that allows us to represent a document as a numerical structure. It does have its issues. Bag of Words is a simple model and it is not the end-all-do-all structure. At best the model keeps the words and the term frequencies ( how ever you decide to calculate term frequencies) at the expense of losing context and order. A document can be represented as a BOW but you most often cannot convert a BOW back to the original document. It does allow us to look at the document as a numerical representation which is useful for other text analytic processes.

Next Up

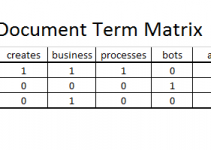

Now you know how to represent a text document as a numerical structure. This is the starting point when working with text data. In the next few posts I will build on BOW with document term matrix and a little more on term frequency calculations.